Builders Buy Software Too

Architectural literacy is the skill legal leaders are missing at the procurement table.

I ran into an old friend this week, a CLO at a large company. We grabbed a coffee, and her first comment about my Substack was, "So you're telling me to stop buying software and go write my own intake tool at night?"

No. It's a little more nuanced than that. And yet, yes. Yes, I want you to vibe code a tool.

Builders across every industry buy software constantly. They pay for licenses. They pay for platforms. They stitch together off-the-shelf pieces without a second thought. What makes them builders isn't that they write every line of code. It's that they can read the architecture of what they're buying, argue with the vendor on the merits, and decide with their eyes open which layers to own and which to rent.

The point of becoming a builder isn't self-sufficiency. It's the ability to learn these new systems so you get to choose.

The problem: passive buyers in a market that rewards active ones

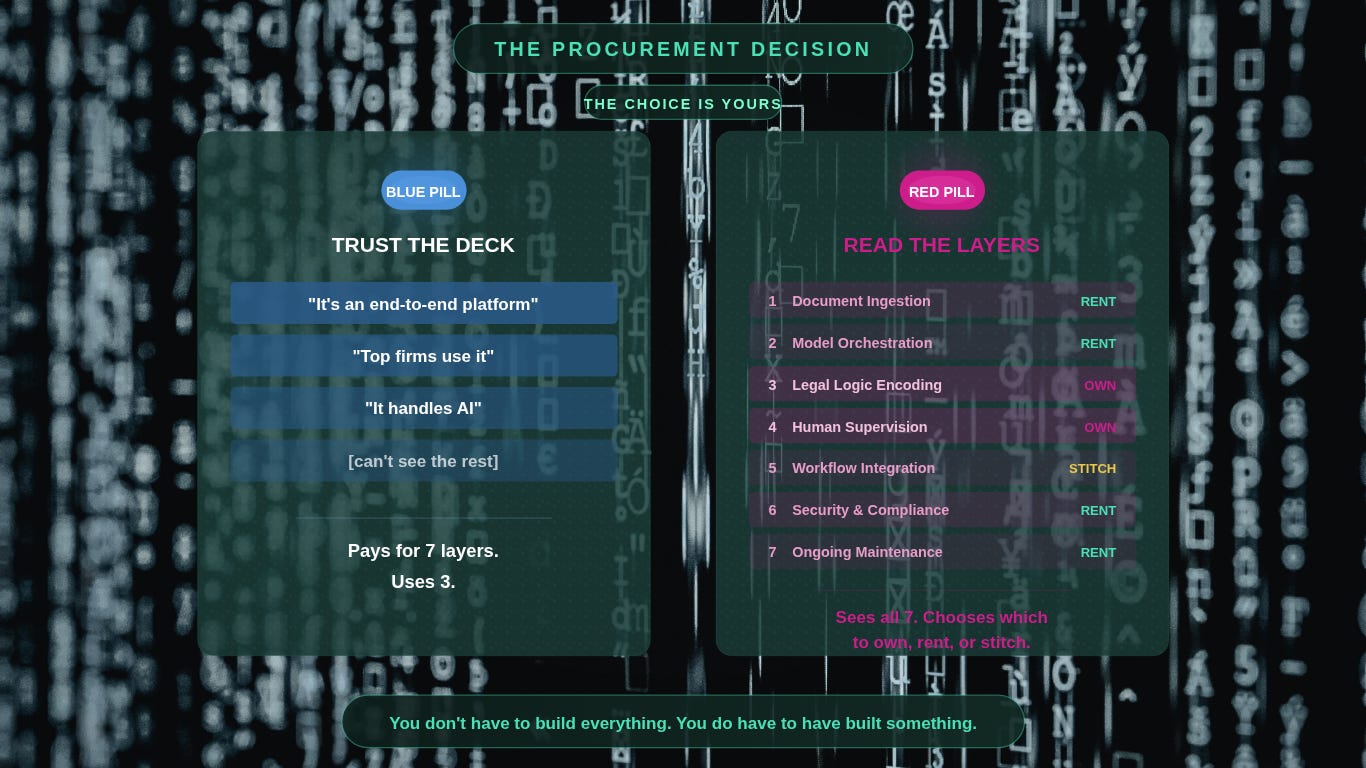

The legal market right now is a vendor carnival. Every week a new AI tool is pitched as the one that finally solves contract review, intake, due diligence, matter management, discovery. Partners and GCs are being asked to make procurement decisions on technology they cannot evaluate from the inside. So they default to what feels safe: wait, read an analyst report, trust the deck, buy what the peer firm bought. It's the expensive choice dressed as the safe one.

How most legal leaders solve it

Two default moves, and you've seen both.

First is vendor trust by proxy. The firm signs whatever the largest peer firm signed six months ago. The GC picks the platform with the most recognizable logos on the website. Nobody can tell you why the product works or where it breaks, but the procurement memo writes itself.

Second is the opposite, and it's what my friend was worried about over coffee. Somebody reads a post like this, decides they've been told to become an engineer, and starts building from scratch because "that's what a builder does." A month later they have a half-finished intake form, a Zapier chain held together with sticky notes, and a growing conviction that this was all a mistake.

Both moves share a root cause. Neither person can read the architecture.

Why it breaks

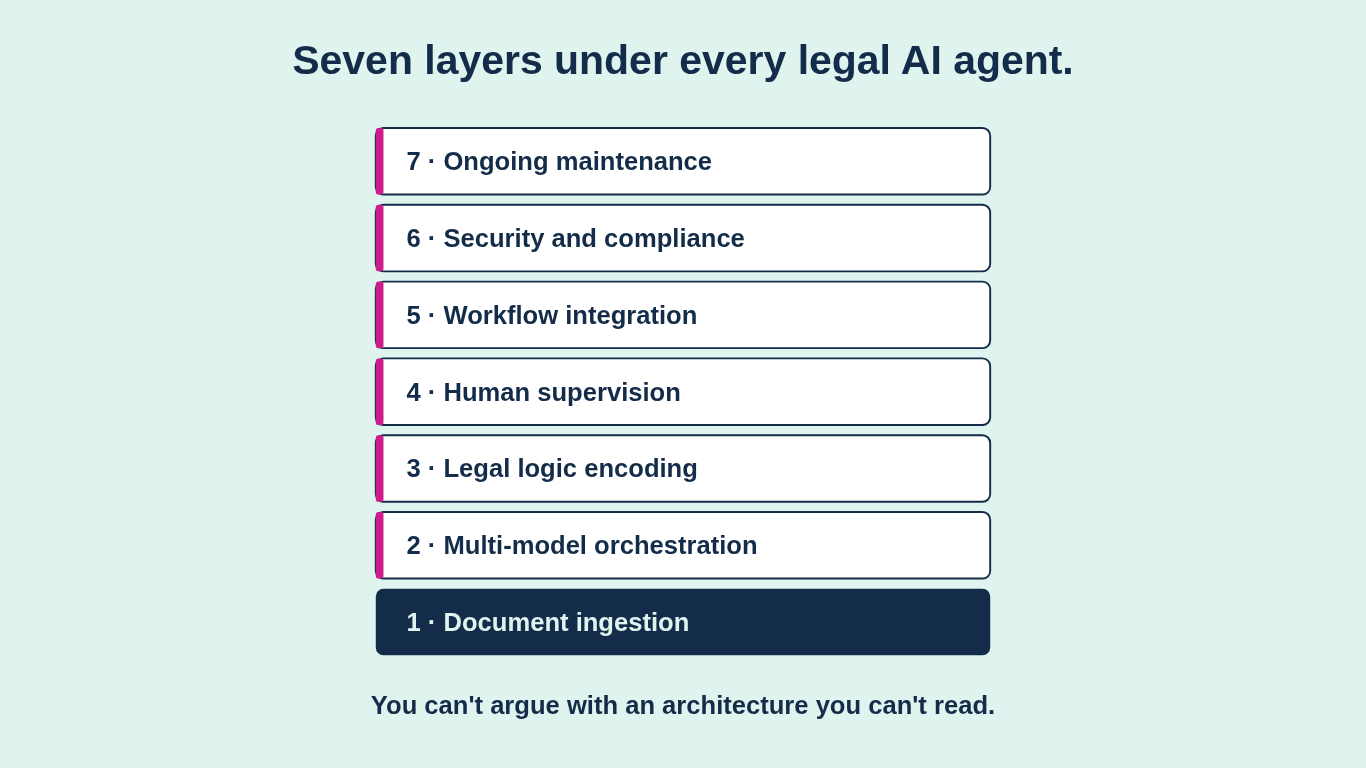

A useful piece from Flank lays out what it actually takes to ship a production-grade legal AI agent end to end. Seven engineering layers sit under the hood: document ingestion, multi-model orchestration, legal logic encoding, human supervision, workflow integration, security and compliance, ongoing maintenance. That means four to six engineers for twelve to eighteen months before a single contract is processed, then two or three permanently.

For full-stack production legal AI at enterprise scale, I agree with where they land. Buy. The economics don't pencil out for anyone who isn't already running real engineering teams. But, as always, that's half the story, and it's the half vendors like you to stare at.

The interesting half is that you can only agree or disagree with any of this on the merits if you can read the seven layers. The builder-minded lawyer says, "Ingestion and compliance I rent, legal logic encoding I keep in-house because it's my edge, supervision I design myself." The passive lawyer says, "The vendor told me it's a platform." One of those people makes a better decision every quarter for the next ten years.

A Tuesday afternoon in a GC's office

Here’s a story that every lawyer reading this will viscerally feel. It’s a little bit of lack of knowledge, a little bit of nobody gets fired for buying IBM, and a little bit of fear. I’ve been in the room when this decision is being made more times than I can count, and it’s almost always the same.

A GC walks into her office Tuesday afternoon with three options on her desk for a contract intake system. A six-figure vendor with a polished demo and a great logo wall. A scrappier platform with better hooks. A “Frankenstein” form builder, workflow engine, and retrieval layer stitched together by a contractor. Six months earlier she’d have picked the first without reading past the executive summary. She’d have paid for seven layers and used three.

Same GC, different quarter. She reads up on what actually goes into shipping production legal AI and makes her team draw the seven layers on a whiteboard for each option.

The first owns all seven and charges accordingly. The second owns five and opens the other two to her team. The Frankenstein owns two well and leaves her exposed on security and maintenance. Her head of legal ops says: “We’ve been evaluating features. We should have been evaluating layers.” She buys the second.

On the procurement call she names which of the seven layers she’s actually paying for and which she plans to own herself. The vendor’s rep stops reading from the deck and starts answering real questions. The contract closes on terms her legal team understands and can leverage.

She doesn’t write a single line of code on this deal. But the only reason she can run that room is that the quarter before, she spent two weekends building a clause extractor on her own laptop, just to see what broke. That’s where the vocabulary came from.

Building is the learning

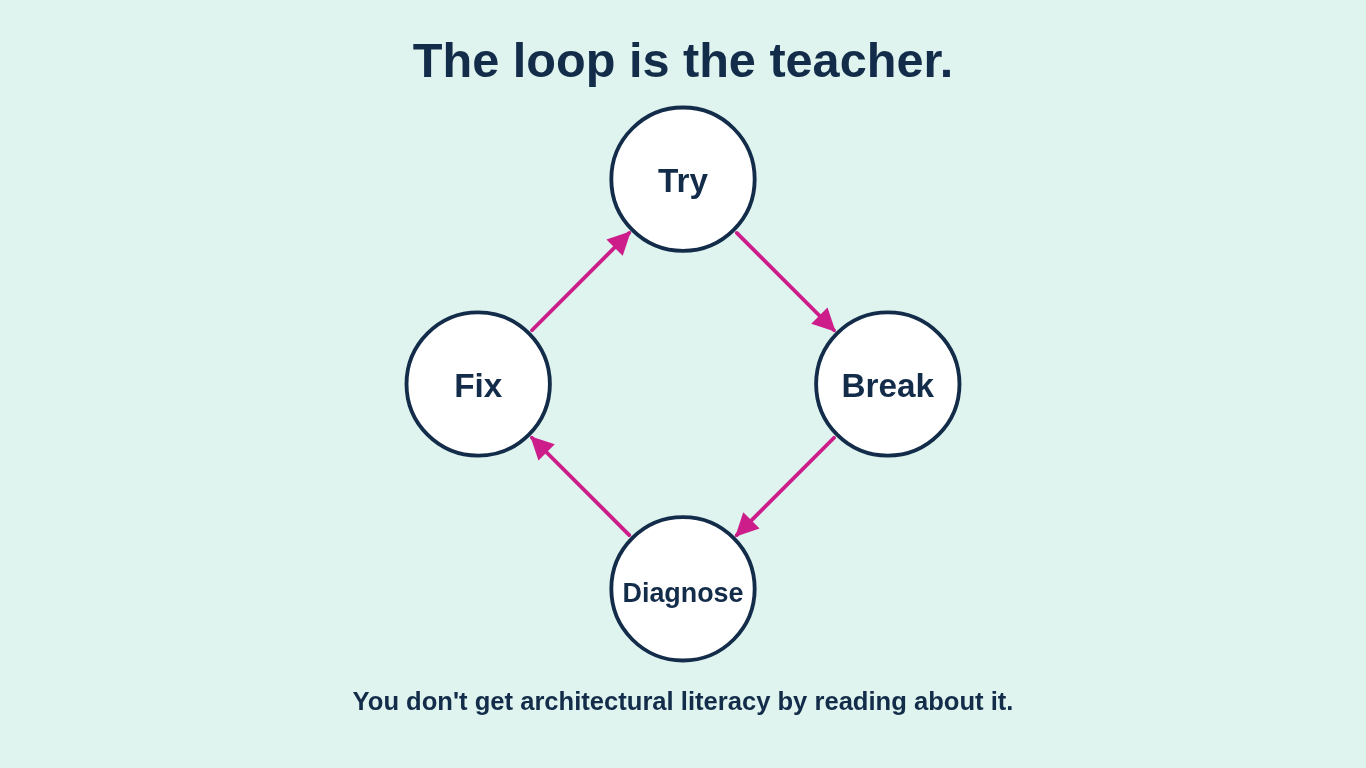

That last detail is the whole point, and it's the part most lawyers miss when I tell them to operate like builders. I'm not telling you to build because building is an end in itself. I'm telling you to build because it is, by a wide margin, the fastest way to actually learn this stuff. You try something. It breaks. You find out why. You fix one thing. It breaks somewhere else. You learn what ingestion actually means the first time a scanned PDF comes in sideways. You learn what retrieval actually means the first time your model confidently cites a case that does not exist. The loop is the teacher.

Every serious study of how people actually learn points the same way. Freeman and colleagues' 2014 PNAS meta-analysis pooled 225 studies of active versus passive learning in undergraduate STEM classrooms. Students in traditional lectures were one and a half times more likely to fail. The setting is undergraduate STEM, not senior legal practice, but the failure mode is identical: passive exposure to an unfamiliar system does not build a working model of that system. Deslauriers and colleagues followed up in 2019 with a result that should make every senior lawyer uncomfortable: students in active classrooms learned measurably more but felt they learned less than their passive-lecture peers. The passive stance feels more competent. It isn't.

The one I come back to most is Dell'Acqua and colleagues' 2023 study of 758 BCG consultants using GPT-4. Inside the model's frontier, AI users finished 12.2% more work, 25.1% faster, at more than 40% higher quality. Outside the frontier, AI users were nineteen percentage points less likely to get the right answer than consultants working without AI. The difference between winners and losers wasn't raw intelligence. It was whether they interrogated the model or trusted it. The interrogators won. The trusters got confidently wrong answers at speed. "Interrogated the model" is just another way of saying something lawyers already know: the Socratic method. Interrogate like a builder: treat procurement as something to build with instead of something to buy from.

You do not get to architectural literacy by reading about it. You get there by breaking things and fixing them on a short loop. Pin that to the wall.

What being a builder actually means

You can read the seven layers. When a vendor rep says "our platform handles that end-to-end," you can ask which layer and hear the answer for what it is. Your build-versus-buy conversation stops being a gut call and starts being a portfolio decision: this layer we own because it's our edge, this one we rent because it's a commodity, this one we stitch because nothing off the shelf fits.
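If it helps to see that portfolio on a screen instead of a whiteboard, here is a minimal Python sketch. The seven layer names come from the Flank piece, and the four decisions filled in are the ones the builder-minded lawyer voices earlier in this post. Everything else (the `Stance` enum, the `decisions` dictionary, the helper names) is a hypothetical structure invented for illustration, not anyone's product or method.

```python
from enum import Enum


class Stance(Enum):
    OWN = "own"        # our edge, keep it in-house
    RENT = "rent"      # commodity, let the vendor run it
    STITCH = "stitch"  # nothing off the shelf fits, assemble it


# The seven layers, in the order the Flank piece lists them.
LAYERS = [
    "document ingestion",
    "multi-model orchestration",
    "legal logic encoding",
    "human supervision",
    "workflow integration",
    "security and compliance",
    "ongoing maintenance",
]

# Hypothetical portfolio. Only these four calls appear in the post;
# the other three layers are deliberately left undecided.
decisions = {
    "document ingestion": Stance.RENT,
    "security and compliance": Stance.RENT,
    "legal logic encoding": Stance.OWN,
    "human supervision": Stance.OWN,
}


def undecided(decisions):
    """Layers with no stance yet: the questions to bring to the vendor call."""
    return [layer for layer in LAYERS if layer not in decisions]


def summarize(decisions):
    """Group decided layers by stance, preserving the layer order."""
    summary = {stance: [] for stance in Stance}
    for layer in LAYERS:
        stance = decisions.get(layer)
        if stance is not None:
            summary[stance].append(layer)
    return summary


if __name__ == "__main__":
    for stance, layers in summarize(decisions).items():
        print(f"{stance.value}: {layers}")
    print(f"still to decide: {undecided(decisions)}")
```

The useful output is the last line. Any layer still sitting in the undecided list is one you have not yet asked the vendor about.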

And here's the other half of the picture nobody in the vendor carnival wants you to notice. A lot of the tools lawyers actually need every day are not full-stack production systems. They are small, scoped, and local. A clause extractor that runs on your own laptop. A retrieval layer inside your firm's existing Microsoft or Google tenancy so nothing crosses a confidentiality line. A triage assistant running on a local model so privileged material never leaves the perimeter. The stack under these is mundane: a Python notebook or a Google Apps Script, an open-source embedding model, a form your team is already using, and an afternoon of pairing with the AI coding assistant your firm is already paying for. Two weekends of work, not twelve months. No engineering team. No £500K run rate. I've built some of these. Lawyers I work with have built more. They don't replace the big platforms. They're the reason you can tell when the big platforms are being oversold.
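To make "a clause extractor that runs on your own laptop" concrete, here is a deliberately naive first pass in Python, standard library only. It assumes the contract is already plain text with numbered clauses, and the keyword patterns and sample text are invented for illustration. It is a sketch of a weekend-one starting point, not a description of any tool mentioned in this post, and it will break on real documents. That is the idea.

```python
import re

# Hypothetical keyword patterns: clause label -> regex that hints at it.
CLAUSE_PATTERNS = {
    "governing_law": re.compile(r"governed by the laws? of", re.IGNORECASE),
    "termination": re.compile(r"\bterminat(e|ion)\b", re.IGNORECASE),
    "limitation_of_liability": re.compile(r"\bliab(le|ility)\b", re.IGNORECASE),
}


def split_clauses(text):
    """Split the text wherever a line starts with a clause number like '1.' or '12.3'."""
    parts = re.split(r"(?m)^(?=\d+(?:\.\d+)*\.?\s)", text)
    return [part.strip() for part in parts if part.strip()]


def extract(text):
    """Return, for each label, the clauses whose text matches that label's pattern."""
    found = {label: [] for label in CLAUSE_PATTERNS}
    for clause in split_clauses(text):
        for label, pattern in CLAUSE_PATTERNS.items():
            if pattern.search(clause):
                found[label].append(clause)
    return found


# Invented sample text, assumed to be clean and numbered.
SAMPLE = """1. Term. This Agreement begins on the Effective Date.
2. Termination. Either party may terminate on 30 days notice.
3. Governing Law. This Agreement is governed by the laws of England and Wales.
"""

if __name__ == "__main__":
    for label, clauses in extract(SAMPLE).items():
        print(label, "->", clauses)
```

Feed it a scanned PDF, a contract with unnumbered clauses, or an indemnity that never uses the word "liability", and it fails in ways that teach you what the ingestion and legal logic layers are actually for.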

Being a builder doesn't mean you build everything. It means you can build the small thing when the small thing is the right answer, and you can read the architecture of the big thing when you have to buy it. That optionality is the entire point.

You don't have to build everything. You do have to have built something. Everything else follows.